(Elaborated with the help of an LLM)

Auto-research, Meta-harness, and the New Universal Grammar of Optimization

From machines that speak to machines that do

First there was the machine that speaks.

The spectacle of a disembodied LLM voice, fluent and compliant, answering everything and nothing in the same tone.

Now we have entered the age of the machine that does.

Not just a single conversational agent, but entire staged ensembles of agents—OpenClaw, Hermes agent, Paperclip and their cousins—coordinated as little organizations that plan, execute, correct, and report. The interface is no longer only a chat box, but a kind of theatre of operations: “skills”, tasks, tools, files, terminals, APIs, all orchestrated by scripts that decide which agent does what, in which order, with which memory.

The new question that animates this stage is therefore not simply “how to talk with the machine?”, but “how to create a self‑evolving optimized machine?”—a machine that redesigns itself while it works.

Two recent proposals crystallize this desire particularly well:

- Autoresearch, in which an AI agent repeatedly rewrites its own training script and executes hundreds of experiments overnight, in order to discover better ways of training LLMs.

- Meta Harness, in which an AI agent no longer tries to improve the model itself, but the harness that surrounds it: the entire configuration of memory, prompts, tools and decision policies that constitute an “agentic” system.

These two projects are interesting not just as technical achievements, but as symptoms.

They show how recursivity—the operation by which a system acts upon the very process that produced it—is becoming a normalized design pattern. A pattern that was, for a long time, treated with suspicion in epistemology.

Before looking at the implications, it is worth pausing on this old suspicion.

The epistemological taboo of recursivity

In philosophy and cognitive science, recursivity has often carried the smell of danger.

Self‑reference, self‑modification, self‑observation—these motifs have been associated with paradox, self‑enclosure, and the specter of solipsism (fr. la “vésanie du solipsisme”). The classic bogeyman is the system that folds back on itself until it becomes incapable of negotiating any outside: the mind caught in its own representations, the theoretical observer trapped in an infinite regress of self‑observation, the “madness” of a thought that only sees its own mirrors.

What changes today is not the ontology of machines, but the attitude of designers.

Designers increasingly treat recursivity as a perfectly acceptable engineering primitive. They are willing to build systems that rewrite their own scripts, evaluate their own transformations, and accumulate versions of themselves as experimental material—without interpreting this as an epistemological scandal.

Autoresearch is exemplary here. An AI agent is given:

- A small training script for a GPT‑like language model.

- A fixed dataset and a simple evaluation metric.

- The right to repeatedly edit the training script, run an experiment, observe the result, and keep or discard its own modifications.

The agent does not “go crazy” in any interesting existential sense. It simply explores the space of training setups more intensively than a human could, overnight, and converges faster on better configurations.

Recursivity is turned into a mundane optimization loop.
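The keep-or-discard loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Autoresearch's actual code: `run_experiment` is a hypothetical toy objective standing in for "train and evaluate", `propose_edit` is a random perturbation standing in for the agent editing the training script, and the settings `lr` and `width` are invented for the example.

```python
import random

def run_experiment(config):
    """Stand-in for 'train, then evaluate': returns a loss (lower is better).
    Hypothetical toy objective, minimal at lr=0.01 and width=64."""
    return (config["lr"] - 0.01) ** 2 * 1e4 + ((config["width"] - 64) / 64) ** 2

def propose_edit(config, rng):
    """Stand-in for the agent editing the script: perturb one setting."""
    candidate = dict(config)
    if rng.random() < 0.5:
        candidate["lr"] = max(1e-4, candidate["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    else:
        candidate["width"] = max(8, candidate["width"] + rng.choice([-16, -8, 8, 16]))
    return candidate

def research_loop(initial, steps=200, seed=0):
    """Edit, run, observe, then keep or discard; every trial is logged."""
    rng = random.Random(seed)
    best, best_loss = initial, run_experiment(initial)
    log = [(0, best, best_loss, "baseline")]
    for step in range(1, steps + 1):
        candidate = propose_edit(best, rng)
        loss = run_experiment(candidate)
        kept = loss < best_loss
        log.append((step, candidate, loss, "kept" if kept else "discarded"))
        if kept:
            best, best_loss = candidate, loss
    return best, best_loss, log

best, best_loss, log = research_loop({"lr": 0.1, "width": 16})
print(best, round(best_loss, 4), len(log))
```

Note where the recursion lives in this sketch: in the log and in the comparison `loss < best_loss`. The self-modification is fully externalized, and the single line that decides what survives is the selection criterion.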

The epistemological taboo is not lifted by some metaphysical transformation of machines, but by a practical decision: a willingness to externalize self‑modification into code, logs and metrics, and to consider this safe and productive enough to be automated.

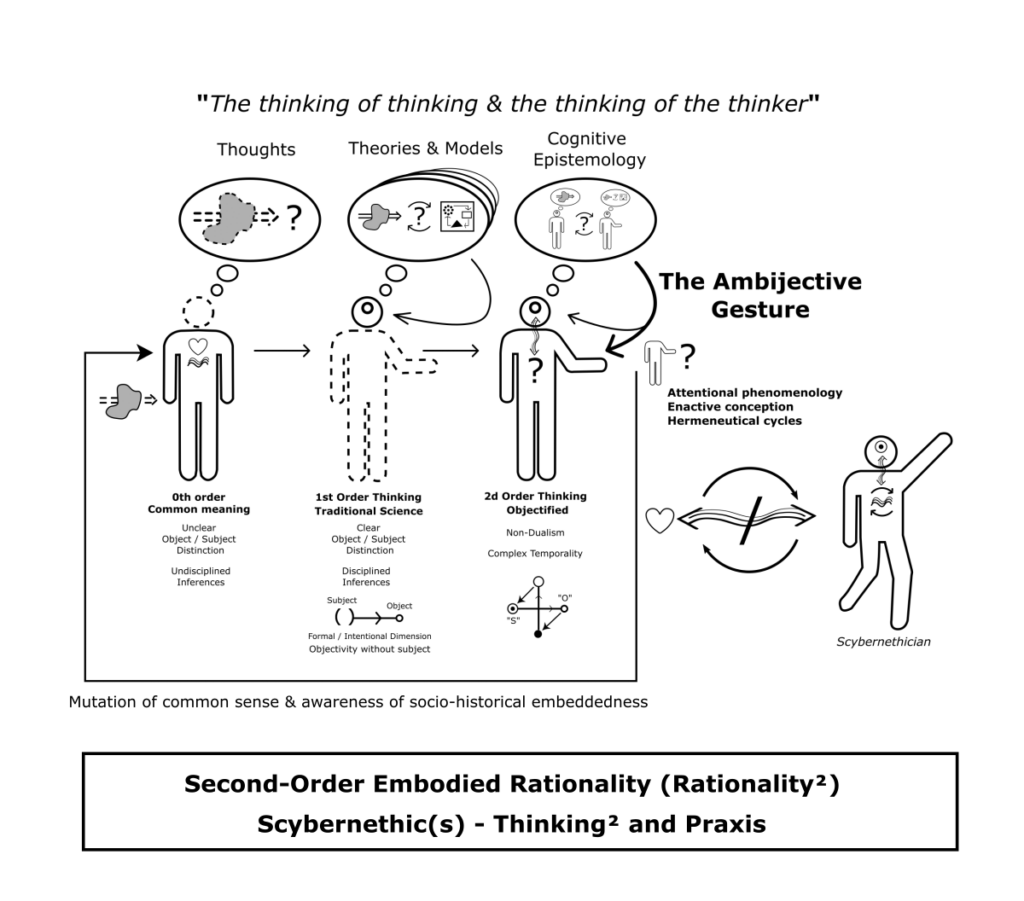

This shift matters, because it reopens a project that second‑order cybernetics had formulated decades ago, but which remained largely theoretical in mainstream computing.

Second‑order cybernetics, taken literally

Second‑order cybernetics proposed a simple but radical move:

instead of only acting on objects, act on the relations and observations that define those objects. Instead of controlling a system “from the outside,” design the system so that it participates in its own control and description.

In the context of AI, Autoresearch and Meta Harness are concrete realizations of this move.

- In Autoresearch, the human does not hand‑code one definitive training setup and then repeatedly run it. The human specifies a program for conducting research: a kind of constitution that describes how an agent should generate hypotheses (changes to the training script), how it should test them, and how it should record results.

The primary design object is no longer the model, but the research loop itself.

- In Meta Harness, the human does not necessarily design, by hand, the full architecture of an “agent”: how it will store memory, how it will retrieve it, how it will route between tools, how many steps it will take, and so on. Instead, the human defines tasks, evaluation procedures, and a budget. The AI agent is then given access to a filesystem full of candidate “harnesses” and their performance logs, and is asked to propose better harness designs.

Here again, the design object is a loop of operation rather than a static configuration.
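A harness search of this kind might look like the following sketch. Everything here is an assumption made for illustration: the `Harness` fields, the toy `evaluate` formula (which stands in for running a fixed model on benchmark tasks), and the `propose` function (which stands in for the proposing agent reading past results). It is not the Meta Harness implementation.

```python
from dataclasses import dataclass
import itertools

@dataclass(frozen=True)
class Harness:
    memory_items: int  # how many past notes are retrieved per step
    max_steps: int     # how many reasoning/tool steps are allowed
    use_critic: bool   # whether a self-evaluation pass is run

def evaluate(harness):
    """Hypothetical benchmark score in [0, 1] for a FIXED model.
    Toy formula: some memory and steps help, too much context hurts."""
    score = 0.4
    score += 0.05 * min(harness.memory_items, 4)
    score -= 0.02 * max(harness.memory_items - 4, 0)
    score += 0.03 * min(harness.max_steps, 6)
    score += 0.1 if harness.use_critic else 0.0
    return round(max(0.0, min(1.0, score)), 3)

def propose(archive):
    """Stand-in for the proposing agent: read the archive of past results
    and return an untried local variation of the best harness so far."""
    tried = {harness for harness, _ in archive}
    best, _ = max(archive, key=lambda entry: entry[1])
    for d_mem, d_steps, critic in itertools.product((-1, 0, 1), (-1, 0, 1), (False, True)):
        candidate = Harness(best.memory_items + d_mem, best.max_steps + d_steps, critic)
        if candidate.memory_items >= 0 and candidate.max_steps >= 1 and candidate not in tried:
            return candidate
    return None  # neighbourhood exhausted

def search(seed, budget):
    """The human fixes the tasks (evaluate) and the budget; the loop does the rest."""
    archive = [(seed, evaluate(seed))]
    for _ in range(budget):
        candidate = propose(archive)
        if candidate is None:
            break
        archive.append((candidate, evaluate(candidate)))
    return max(archive, key=lambda entry: entry[1]), archive

(best_harness, best_score), archive = search(Harness(0, 1, False), budget=60)
print(best_harness, best_score, len(archive))
```

The human-written parts are `evaluate` and `budget`; the harness itself is only ever touched by the loop.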

In both cases, the designer is no longer in direct control of the low‑level details.

The designer controls the meta‑description: the protocol by which another agent will act on those details.

This is precisely the spirit of second‑order cybernetics: the locus of design migrates from “what the system does” to “how the system decides how to change what it does.” The crucial intervention is not on the object itself, but on the machinery that observes, experiments and selects among possible objects.

What is new is that this is no longer a purely theoretical proposal. It is being materialized in code, logs, and orchestration scripts. And it opens the door to a form of design that is less about writing behavior and more about writing conditions of evolvability.

But evolvable what?

Here, a shift is underway: from tweaking prompts to exploring entire enactive architectures.

From prompt‑tweaking to enactive architectures

A popular image of “self‑improvement” today is still rather modest: the idea that agents will refine their prompts, update a few instructions, maybe compress or clean up context, and gradually become more efficient.

Meta Harness invites a different picture.

In this setup, the base language model remains fixed. The intelligence lies not in changing its internal weights, but in modifying the harness that surrounds the model: the code that determines

- What kinds of memory exist (short‑term traces, long‑term notes, episodic logs, structured knowledge).

- How and when these memories are retrieved or updated.

- Which tools the system can call, and in which sequences.

- How steps of reasoning are structured: linear, branching, iterative, reflective.

- Which signals are fed back into the process as “self‑evaluation.”

The system does not simply self‑adjust its “prompts.” It explores the space of enaction architectures: the architectures of perception‑action loops by which it engages a world.

This is already visible in the growing ecosystem of multi‑agent frameworks. Systems like Paperclip or various “AI operating systems” configure not just one agent, but a whole ecology:

- A planner, a researcher, a coder, a critic, a tester.

- Shared or separate memories.

- Different degrees of access to tools and external resources.

What matters, in such ecologies, is less the precise wording of each prompt, and more the pattern of couplings:

- Who talks to whom, when, with access to which parts of the shared history.

- Which loops are allowed to close (feedback) and which are forced to remain open (delegation).

- Where the system pauses, asks for human input, or silently continues.

Self‑evolution in this sense is not the fine‑tuning of a sentence, but the reconfiguration of a choreography.

The design variable becomes the architecture of enaction itself: the way in which a predictive core is staged, situated, equipped with memory and tools, and entangled with a milieu.

This shift brings us to a broader claim: these architectures are not just ad hoc engineering tricks. They are articulating a new kind of universal grammar.

The new universal grammar: harnesses

Classically, grammar is what shapes what can be said: a system of combinatory rules that structures utterances.

In contemporary AI, one can see two grammars emerging in parallel:

- A grammar of internal prediction.

This is the domain of weights, layers, and optimization algorithms: the internal statistical structure that lets a model anticipate the next token, the next image patch, the next move in a game. Training processes like those explored in Autoresearch belong here: they refine the inner grammar of prediction by trying many different training setups and selecting those that produce lower error.

- A grammar of staging or harnessing.

This is the domain of orchestration: how a model is embedded in loops of action, memory and interaction. Meta Harness makes this explicit by showing that, even with a fixed model, changing the harness alone can yield order‑of‑magnitude gains on tasks—sometimes outperforming carefully hand‑crafted strategies and specialized optimizers.

The harness, in this sense, is a kind of universal grammar of enaction:

- It prescribes which parts of the world the system can see, in which form, and at which tempo.

- It prescribes how traces of past encounters are retained, structured, and made available.

- It prescribes the sequences of moves by which the system can act on its environment (read, write, call tools, propose, execute).

The same underlying model can be:

- A polite assistant.

- A ruthless automaton that spams a market.

- A patient researcher running hundreds of controlled experiments.

- An architect of its own future harnesses.

Nothing in the model’s internal weights alone determines this.

It is the grammar of staging—the harness—that configures its role.

This is why Autoresearch and Meta Harness are so revealing: they show a new norm where both grammars are subject to automated exploration. One loop explores internal prediction, the other explores harness design.

But this raises a question that is usually treated as trivial, when it should be central.

Optimization as a political question

All these systems are obsessed with optimization.

Autoresearch optimizes toward a lower loss on a given dataset, as quickly and cheaply as possible. Meta Harness optimizes toward higher scores on benchmark tasks, with less context usage and better stability. Companies frame these gains in terms of throughput, latency, cost per token, or benchmark performance.

On the surface, these criteria look technical and neutral.

In practice, they encode a whole politics of what counts as a “good” transformation.

Optimization is never just about “doing better.” It is always about doing better according to some selection criterion. And that criterion is rarely innocent.

Typically, it privileges:

- Speed over slowness.

- Volume over depth.

- Formal correctness over interpretive richness.

- Immediate performance over long‑term intelligibility.

In other words, a productivist ontology is silently inscribed into the recursive loops.

The very fact that designers are comfortable delegating self‑modification to machines rests on the assumption that the selection criterion is well‑posed and safe: the system is allowed to rewrite its own training script or its own harness because the metric that guides these rewrites is supposed to be trustworthy.

Yet the choice of metric is neither purely technical nor purely scientific. It is also:

- Economic (what reduces cost, increases value).

- Institutional (what fits existing benchmarks and publishing norms).

- Cultural (what counts as “meaningful improvement” in a given community).

Once recursivity is normalized, these choices are amplified.

They shape entire families of systems that will, by construction, converge toward what the metric rewards.

The question “against what selection criterion will we optimize the convergence of the system?” is therefore not a detail to be left to engineers. It is a first‑order design choice that should be visible, discussable, and revisable.

If it is not, the risk is not that machines become solipsistic, but that human worlds become formatted—almost unconsciously—by machine‑friendly optimization grammars.

Against this backdrop, one can imagine different selection criteria, oriented less toward throughput and more toward sense‑making.

Management, metrics, and invisible control

Management offers a useful mirror here.

Over the last decades, many organizations have moved away from explicit command‑and‑control toward project‑based structures and “empowered” teams. Instead of issuing direct orders, management defines objectives, KPIs, deadlines, and reporting rituals; workers are invited to “own” their projects, while learning to orient themselves by these metrics.

Power does not disappear; it changes level.

It migrates from visible instruction (“do this”) to the quieter configuration of the environment in which action takes place: which goals count, which numbers matter, which dashboards are watched.

Recursive agentic systems replay this pattern in technical form.

Agents are ostensibly “free” to rewrite their own scripts and harnesses, but only within an objective space already fixed by designers: loss functions, benchmark scores, cost ceilings, latency targets. The decisive intervention is not at the level of each step the agent takes, but at the level of the optimization landscape and feedback loops that make certain evolutions natural and others almost unthinkable.

What appears as autonomy at the local level is thus often the flip side of a very tight grip at the meta‑level.

Designing for sense‑making, not only throughput

What would it mean to design a self‑evolving system whose selection criteria are not only about speed and score, but about the quality of sense they help generate?

One can at least sketch a different family of objectives:

- Depth of interpretation: Does the system generate readings of a situation that open new perspectives, instead of merely confirming the obvious?

- Generativity of distinctions: Does it introduce distinctions that prove fruitful for further inquiry, rather than endlessly recombining familiar categories?

- Dialogical robustness: Does it remain capable of engaging with disagreement, ambiguity, and shifts in framing, instead of collapsing into canned answers?

- Phenomenological coherence: Does its style of interaction remain consistent and intelligible over time, from the standpoint of a lived experience, rather than cycling through incompatible personas?

These are not simple metrics to encode. They resist reduction to a single number.

Yet that is precisely why they should appear at the meta‑level of design.

One of the most interesting aspects of Autoresearch is the existence of a kind of program of research: a document that describes how the agent should seek improvements, what counts as a promising direction, how to balance exploration and exploitation. In a similar vein, collaborative projects often define a “program.md” that functions as a constitutional text for a software ecosystem.

Nothing prevents such documents from including scybernethical constraints:

- Requirements for traceability of changes: every self‑modification must be explainable and reversible.

- Obligations to maintain certain forms of dialogical openness: channels where human users can contest or question the system’s transformations.

- Commitments to preserve specific modes of slowness or care in contexts where speed is not the primary value.

In other words: the same way one writes a protocol for how an agent should tweak its training script, one can write a protocol for how a system is allowed to reconfigure its own enactive architecture, under which conditions, and with which forms of human oversight.
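As one possible reading of the traceability requirement, the sketch below refuses any modification that arrives without an explanation and keeps enough history to undo it. The class and method names (`TracedConfig`, `modify`, `revert_last`) are hypothetical, chosen for this example only.

```python
from dataclasses import dataclass, field
from typing import Any

_MISSING = object()  # marks "this key did not exist before the change"

@dataclass
class Change:
    key: str
    old: Any
    new: Any
    rationale: str

@dataclass
class TracedConfig:
    values: dict
    history: list = field(default_factory=list)

    def modify(self, key, new, rationale):
        """Apply a change only if it comes with an explanation; record the old value."""
        if not rationale.strip():
            raise ValueError("every self-modification must carry an explanation")
        old = self.values.get(key, _MISSING)
        self.history.append(Change(key, old, new, rationale))
        self.values[key] = new

    def revert_last(self):
        """Undo the most recent change and return its record."""
        change = self.history.pop()
        if change.old is _MISSING:
            del self.values[change.key]
        else:
            self.values[change.key] = change.old
        return change

config = TracedConfig({"max_steps": 4})
config.modify("max_steps", 8, "long tasks were cut off before completion")
config.modify("use_critic", True, "answers were not being checked")
undone = config.revert_last()
print(config.values, undone.rationale)
```

The constraint is enforced by the protocol, not by the agent's goodwill: a change without a rationale never reaches the configuration at all.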

These meta‑protocols would not eliminate conflict or ambiguity. They would make them explicit.

Which brings us back, finally, to the relation between machine recursivity and human bio‑logic.

Machines, recursivity and lived bio‑logic

There is a temptation, when looking at these self‑evolving systems, to project onto them a kind of nascent interiority: as if the machine, by rewriting itself, acquired a self‑experience.

But the recursivity at stake here is essentially externalized.

It is written:

- In code that can be inspected.

- In logs of experiments, performance curves, and diffs between successive versions.

- In file systems where different harnesses and their results coexist.

Nothing in this setup guarantees, or even indicates, any phenomenology.

By contrast, human recursivity is always embodied.

When human beings reflect on their own operations, modify their habits, or reconstruct their identity, this is not just a reconfiguration of scripts. It is also an affective, corporeal process, with risks of anxiety, dissolution, or transformation that are irreducibly lived.

From this perspective, the interesting asymmetry is not that machines are “free” from the madness of solipsism, but that designers are willing to normalize, in machines, a form of recursivity they would hesitate to apply to themselves.

The danger is not a machine that becomes solipsistic.

It is a culture that becomes formatted by recursive optimization loops whose criteria are invisible and whose effects on lived experience are hard to contest.

If the universal grammar of harnesses becomes the default way of organizing work, knowledge and interaction, then the constraints of what is “harnessable” may start to govern what counts as real, important, or thinkable.

The challenge, then, is not to humanize machines, but to protect the heterogeneity of human sense‑making in a world increasingly mediated by machine‑centric grammars.

One possible response is to design architectures where recursivity itself is made a shared, negotiated process.

Toward scybernethical architectures of self‑evolution

Imagine a system like Nexus: a multi‑agent ecology explicitly designed as a space of co‑evolution between human and machine processes.

Such a system could integrate the lessons of Autoresearch and Meta Harness, while embedding them in a second‑order ethics:

- Traceable self‑evolution: every change in the enactive architecture (which agents exist, how they are coupled, what memories they use) is logged, explained, and exposed to human scrutiny. The system’s own history of transformations becomes part of the dialogue, not a hidden implementation detail.

- Dialogical checkpoints: certain classes of modifications—those that alter how tasks are framed, how success is defined, or how user input is filtered—are systematically routed through human negotiation. Recursivity is not suppressed, but slowed down and folded into conversation.

- Situated selection criteria: instead of a single universal metric, the system operates with a plurality of evaluation functions that reflect different stakes: some economic, some epistemic, some phenomenological. Conflicts among these criteria are not resolved once and for all, but kept visible and contestable.
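To make the contrast with a single scalar metric concrete, here is a minimal sketch of plural selection criteria combined with a dialogical checkpoint. The criterion names, the scores, and the `changes_framing` flag are assumptions for illustration; the sketch also assumes both score dictionaries share the same keys and that higher is better. A proposal is accepted automatically only when it is at least as good on every criterion and better on one; any trade-off is handed back to human negotiation rather than being averaged away.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` on every criterion
    and strictly better on at least one (higher is better)."""
    return all(a[k] >= b[k] for k in b) and any(a[k] > b[k] for k in b)

def review(current, proposal, changes_framing=False):
    """Decide what happens to a proposed self-modification."""
    if changes_framing:
        # Dialogical checkpoint: changes to how tasks or success are
        # defined always go through human negotiation.
        return "negotiate"
    if dominates(proposal, current):
        return "accept"
    if dominates(current, proposal):
        return "reject"
    # The criteria disagree (or nothing changed): keep the conflict visible.
    return "negotiate"

current = {"economic": 0.6, "epistemic": 0.5, "phenomenological": 0.7}
cheaper_but_shallower = {"economic": 0.9, "epistemic": 0.3, "phenomenological": 0.7}
strictly_better = {"economic": 0.7, "epistemic": 0.5, "phenomenological": 0.7}

print(review(current, cheaper_but_shallower))
print(review(current, strictly_better))
print(review(current, strictly_better, changes_framing=True))
```

The design choice worth noticing is what the function refuses to do: it never collapses the three scores into one number, so a gain in cost cannot silently pay for a loss in interpretive depth.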

In such an architecture, recursivity is not an invisible engine running in the background.

It is a first‑class object of attention, description and experimentation.

The machine can still run its own inner and outer loops, explore configurations of training and harnessing, and propose new ways of organizing its own cognition and action. But the criteria according to which these proposals are accepted or rejected are not simply delegated to a single number.

They become part of a shared bio‑logic: a lived logic of co‑design, where human bodies, institutions and technical systems must negotiate what counts as a “good” evolution.

The question is no longer whether machines can safely recurse, but:

Under which grammars of optimization do we agree to let them recurse,

and how do these grammars, in turn, recurse on us?

There is no final answer to this question—only different ways of staging it.

The current vogue for self‑evolving agents is one such staging.

A scybernethical approach would aim to design another: one where recursivity is not only a technical feature, but a shared practice of sense‑making, open to critique, revision, and care.

References

- GitHub – karpathy/autoresearch: AI agents running research on single-GPU nanochat training automatically

- Meta-Harness

- AI Self EVOLUTION (Meta Harness) – YouTube

- GitHub – openclaw/openclaw: Your own personal AI assistant. Any OS. Any Platform. The lobster way. 🦞

- GitHub – paperclipai/paperclip: Open-source orchestration for zero-human companies

- GitHub – NousResearch/hermes-agent: The agent that grows with you
